Fresh on the arXiv, a nice new paper by Lie Qian proving potential automorphy results for ordinary Galois representations

\(\rho: G_F \rightarrow \mathrm{GL}_n(\mathbf{Q}_p)\)

of regular weight \([0,1,\ldots,n-1]\) for arbitrary CM fields \(F\). The key step in light of the 10-author paper is to construct suitable auxiliary compatible families of Galois representations for which:

- The mod-\(p\) representation coincides with the one coming from \(\rho\),

- The compatible family can itself be shown to be potentially automorphic.

The main result then follows by an application of the p-q switch. Something similar was done by Harris–Shepherd-Barron–Taylor in the self-dual case. They ultimately found the motives inside the Dwork family. Perhaps surprisingly, Qian also finds his motives in the same Dwork family, except now taken from a part of the cohomology which is not self-dual!

This result doesn’t *quite* have immediate implications for the potential modularity of compatible families: If you take a (generically irreducible) compatible family with Hodge-Tate weights \([0,1,\ldots,n-1]\) then one certainly expects (with some assumption on the monodromy group) that the representations are generically ordinary, but this is a notorious open problem even in the analogous case of modular forms of high weight. One way to try to avoid this would be by proving analogous results for non-ordinary representations. But then you run into genuine difficulties trying to find such arbitrary residual representations inside the Dwork family over extensions unramified at \(p\). This difficulty also arises in the self-dual situation, and the ultimate fix in BLGGT was to bypass such questions by applying Khare-Wintenberger style lifting results. However, such lifting results can’t immediately be adapted to the \(l_0 > 0\) situation under discussion here.

On the other hand, I guess one should be OK for very small \(n\): If \(M\) is (say) a rank three motive over \(\mathbf{Q}\) with HT weights \([0,1,2]\), determinant \(\varepsilon^3\), and coefficients in some CM quadratic field \(E\) (you have to allow coefficients since otherwise the motive is automatically self-dual, see here), then one is probably in good shape. For example, the eigenvalues of Frobenius are Weil numbers \(\alpha,\beta,\gamma\) of absolute value \(p\), and the characteristic polynomials of Frobenius will have (as noted in the blog post linked to in the previous sentence) the shape

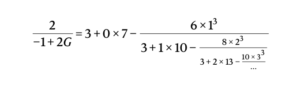

\(X^3 - a_p X^2 + \overline{a_p} p X - p^3,\)

and now for primes \(p\) which split in \(E\), the corresponding \(v\)-adic representation will be ordinary for at least one of the \(v|p\) unless \(a_p\) is divisible by \(p\), which by purity forces \(a_p \in \{-3p,-2p,-p,0,p,2p,3p\}\). From the usual arguments, one sees that there is at least one ordinary \(v\) for almost all split primes \(p\). The rest of the Taylor-Wiles hypotheses should also be generically satisfied assuming the monodromy of \(M\) is \(\mathrm{GL}(3)\), potential modularity in any other case surely being more or less easy to handle directly. Hence Qian’s result proves that such motives are potentially automorphic. A funny thing about this game is that actually finding examples of non-self-dual motives is very difficult, but in this case, van Geemen and Top studied a family of such motives \(S_t\) occurring inside \(H^2\) of the surface

\(z^2 = xy(x^2 - 1)(y^2 - 1)(x^2 - y^2 + t x y)\)

for varying \(t\) (they note that this family was first considered by Ash and Grayson; also, apologies for changing the notation slightly from the paper, but I prefer to denote the parameter of the base by \(t\)). They then compare their particular motive when \(t=2\) to an explicit non-self-dual form for \(\mathrm{GL}(3)/\mathbf{Q}\) of level \(128\). I’m sure by this time (after HLTT and Scholze) someone has verified using the Faltings–Serre method that \(S_2\) is automorphic, but now by Qian’s result we know that the \(S_t\) are potentially automorphic for all \(t\).
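
For readers who want to see why the coefficient of \(X\) in the rank three characteristic polynomial above is \(p\,\overline{a_p}\), here is a short sketch of the computation. It assumes only what is stated in the text: the Frobenius eigenvalues \(\alpha,\beta,\gamma\) are Weil numbers of absolute value \(p\), and their product is \(p^3\) because the determinant is \(\varepsilon^3\). Fixing a complex embedding of the coefficients and writing \(e_2\) for the second elementary symmetric function are conventions introduced here for illustration, not notation from the papers discussed.

```latex
% Assumptions: a fixed complex embedding; e_2 is notation introduced for this sketch.
\begin{align*}
a_p &= \alpha + \beta + \gamma, \qquad
e_2 = \alpha\beta + \alpha\gamma + \beta\gamma, \qquad
\alpha\beta\gamma = p^3, \\
\overline{\alpha} &= \frac{p^2}{\alpha}
  \quad \text{since } \alpha\overline{\alpha} = |\alpha|^2 = p^2
  \text{ (and likewise for } \beta, \gamma\text{)}, \\
\overline{a_p} &= p^2\left(\frac{1}{\alpha} + \frac{1}{\beta} + \frac{1}{\gamma}\right)
  = p^2 \cdot \frac{e_2}{\alpha\beta\gamma}
  = \frac{e_2}{p}, \\
e_2 &= p\,\overline{a_p}, \qquad
|a_p| \le |\alpha| + |\beta| + |\gamma| = 3p.
\end{align*}
```

The last inequality is the purity bound used above: if \(p\) divides \(a_p\), then \(a_p/p\) has absolute value at most \(3\), which leaves only finitely many possibilities.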